ARC-AGI-1 & ARC-AGI-2 Guide

Data Structure, Development & Approaches

This technical guide covers the ARC-AGI task format shared by both ARC-AGI-1 and ARC-AGI-2.

Data Structure

So you want to solve ARC-AGI? Let's start by exploring how its data is structured.

This material is also covered in the Explore ARC-AGI Data + Play tutorial video.

Note: ARC-AGI-1 and ARC-AGI-2 share the same structure.

Tasks

ARC-AGI tasks are a series of three to five input and output tasks followed by a final task with only the input listed. Each task tests the utilization of a specific learned skill based on a minimal number of cognitive priors.

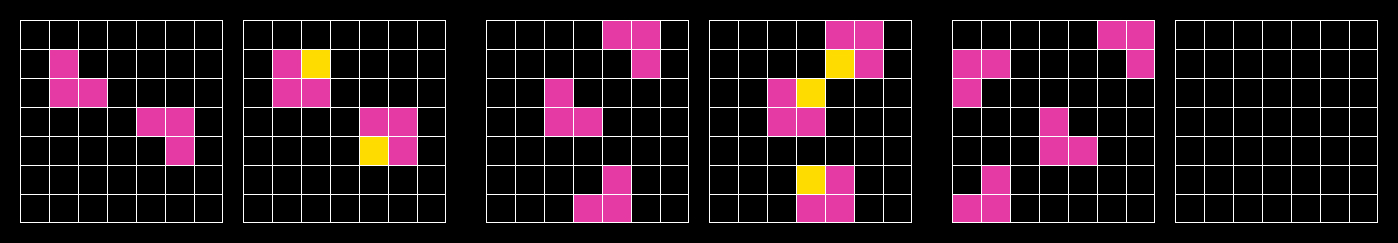

In their native form, tasks are JSON lists of integers. This JSON can also be represented visually as a grid of colors using an ARC-AGI task viewer. You can view an example of a task here.

A successful submission is a pixel-perfect description (color and position) of the final task's output.

ARC-AGI-2

2025 introduces a new version of ARC-AGI, ARC-AGI-2. This is the same format as ARC-AGI-1. All conventions of public/private/semi-private data still apply. For more on the new dataset, see the ARC-AGI-2.

Every task in the ARC-AGI-2 dataset was solved by a minimum of 2 people in 2 attempts or fewer (many were solved by more people). ARC-AGI-2 is more difficult for AI.

We recommend using the ARC-AGI-1 dataset for getting started and then moving to ARC-AGI-2 for more advanced solutions.

Task Data

The following datasets are associated with ARC-AGI:

- Public training set

- Public evaluation set

- Private evaluation set

Outside of the competition, there is also a semi-private evaluation set used for the public leaderboard.

Public

The publicly available data is to be used for training and evaluation.

The public training set contains 1,000 task files you can use to train your algorithm.

The public evaluation set contains 120 task files you can use to test the performance of your algorithm.

To ensure fair evaluation results, be sure not to leak information from the evaluation set into your algorithm (e.g., by looking at the tasks in the evaluation set yourself during development, or by repeatedly modifying an algorithm while using its evaluation score as feedback).

The source of truth for this data is available on the ARC-AGI GitHub Repository, which contains 1,120 total tasks.

Semi-Private

The semi-private evaluation set contains 120 task files, which are privately held on Kaggle. These tasks are used for the intra-year competition standings. They are not included in the public tasks, but they use the same structure and cognitive priors.

These tasks are also used to measure public, closed-source model performance.

Private

The private evaluation set contains 120 task files, which are also privately held on Kaggle. The ARC-AGI leaderboard is measured using these tasks. They are kept private so that models cannot be trained on them. They are not included in the public tasks, but they use the same structure and cognitive priors.

Please note that the public training set consists of simpler tasks whereas the public evaluation set is roughly the same level of difficulty as the private test set.

Set Difficulty

One of the enhancements made with ARC-AGI-2 is the introduction of a difficulty calibration. The Private Evaluation, Public Evaluation, and Semi-Private Evaluation sets are now calibrated to be roughly the same difficulty (within 1 percentage point of each other) as measured by human and AI performance.

Format

As mentioned above, tasks are stored in JSON format. Each JSON file consists of two key-value pairs.

train: a list of two to ten input/output pairs (typically three). Your algorithm uses these to infer a rule.

test: a list of one to three input/output pairs (typically one). Your model should apply the rule inferred from the train set and construct an output solution. You will have access to the test output solution on the public data. The output solution on the private evaluation set will not be revealed.

Here is an example of a simple ARC-AGI task that has three training pairs along with a single test pair. Each pair is shown as a 2x2 grid. There are four colors represented by the integers 1, 4, 6, and 8. Which actual color (red/green/blue/black) is applied to each integer is arbitrary and up to you.

    {
      "train": [
        {"input": [[1, 0], [0, 0]], "output": [[1, 1], [1, 1]]},
        {"input": [[0, 0], [4, 0]], "output": [[4, 4], [4, 4]]},
        {"input": [[0, 0], [6, 0]], "output": [[6, 6], [6, 6]]}
      ],
      "test": [
        {"input": [[0, 0], [0, 8]], "output": [[8, 8], [8, 8]]}
      ]
    }
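To make the format concrete, here is a minimal Python sketch that parses the example task above and prints the shape of every grid. The helper name `grid_shape` is our own (hypothetical) and not part of any official tooling. For a real task file you would read the JSON from disk rather than from an inline string.

```python
import json

# The example task from above, inlined so the sketch is self-contained.
TASK_JSON = """
{
  "train": [
    {"input": [[1, 0], [0, 0]], "output": [[1, 1], [1, 1]]},
    {"input": [[0, 0], [4, 0]], "output": [[4, 4], [4, 4]]},
    {"input": [[0, 0], [6, 0]], "output": [[6, 6], [6, 6]]}
  ],
  "test": [
    {"input": [[0, 0], [0, 8]], "output": [[8, 8], [8, 8]]}
  ]
}
"""


def grid_shape(grid):
    """Return (rows, columns) for a grid stored as a list of rows."""
    rows = len(grid)
    cols = len(grid[0]) if grid else 0
    return rows, cols


task = json.loads(TASK_JSON)

# Walk both splits and report the dimensions of each input/output grid.
for split in ("train", "test"):
    for i, pair in enumerate(task[split]):
        print(split, i,
              "input", grid_shape(pair["input"]),
              "output", grid_shape(pair["output"]))
```

Each grid is simply a list of rows, so `grid[r][c]` is the integer at row `r`, column `c`.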

Development

Download

Download the ARC-AGI-2 data from the official ARC-AGI repo on GitHub.

View

There are multiple ways for humans to view the data:

- Testing interface on the official repo (instructions)

- The arcprize.org task viewer

- Community-created apps

Test

There are two ways to measure your progress on ARC-AGI tasks.

- Correct / Incorrect: This evaluation method measures whether or not your model's output answer is identical to the validated solution. This means that the output shape, colors, and positions match. You can view scoring algorithms on Kaggle & GitHub.

- Pixel correctness: The number of pixels that are correctly identified as a % of the total. Some teams use "Pixel Correctness" as another indicator for their score. It can give more information about how your results are performing.
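The two measures above can be sketched in a few lines of Python. This is an illustration only, not the official scoring code. The function names are hypothetical, and treating a wrongly shaped prediction as 0% pixel correctness is our assumption (other conventions are possible).

```python
def is_correct(predicted, expected):
    """Correct / Incorrect: True only if shape, colors, and positions all match."""
    return predicted == expected


def pixel_correctness(predicted, expected):
    """Fraction of cells in `expected` that `predicted` reproduces.

    Assumption: a prediction with the wrong shape scores 0.0.
    """
    if len(predicted) != len(expected):
        return 0.0
    if any(len(p) != len(e) for p, e in zip(predicted, expected)):
        return 0.0
    total = sum(len(row) for row in expected)
    if total == 0:
        return 0.0
    matches = sum(
        p == e
        for p_row, e_row in zip(predicted, expected)
        for p, e in zip(p_row, e_row)
    )
    return matches / total


expected = [[8, 8], [8, 8]]
almost = [[8, 8], [8, 0]]

print(is_correct(almost, expected))         # False
print(pixel_correctness(almost, expected))  # 0.75
```

Note that a high pixel score does not count as a solved task: only an exact match does.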

Approaches

You're free to explore any path you like, but we'd love to save you time by catching you up on the solution approaches that have led to the current state of the art. Join the community discord to find out more from people who have been working on ARC-AGI for years.

1. Discrete program search

This was the first domain of solutions that started working well in the original ARCathon competition in 2020 hosted by Lab42. It involves searching through a massive program space in a discrete, step-by-step manner.

2. Ensemble Solutions

This approach consists of piecing together existing publicly available solutions to correctly answer more tasks than any solution achieved alone. This is the approach that was used to get to the current high score.

One thing to consider in utilizing this approach: it's unlikely that an ensemble approach will be able to generalize to correctly solve tasks outside of the public datasets. If you've got your eyes on the Grand Prize, you'll want to create new and novel techniques.
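As a rough illustration of how several solvers might be combined, here is a minimal majority-vote sketch. The guide does not say how existing ensembles merge their answers, so voting is our assumption, and the solver interface (a callable taking the task and a test input and returning a grid or `None`) is hypothetical.

```python
import json
from collections import Counter


def ensemble_predict(solvers, task, test_input):
    """Run every solver and return the most frequently predicted grid."""
    votes = Counter()
    for solve in solvers:
        try:
            grid = solve(task, test_input)
        except Exception:
            continue  # a crashing solver simply does not vote
        if grid is not None:
            # Lists are unhashable, so vote on the grid's JSON text instead.
            votes[json.dumps(grid)] += 1
    if not votes:
        return None
    best, _ = votes.most_common(1)[0]
    return json.loads(best)


# Three toy solvers standing in for real, independently written ones.
def fill_with_brightest(task, grid):
    color = max(max(row) for row in grid)
    return [[color] * len(row) for row in grid]


def identity(task, grid):
    return grid


def give_up(task, grid):
    return None


solvers = [fill_with_brightest, fill_with_brightest, identity, give_up]
print(ensemble_predict(solvers, None, [[0, 0], [0, 8]]))  # [[8, 8], [8, 8]]
```

A common alternative is to keep only solvers whose programs reproduce every training pair and take the first one that does. Which strategy works better is an empirical question.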

3. Direct LLM Prompting

In this method, contestants use a traditional LLM (like GPT-4) and rely on prompting techniques to solve ARC-AGI tasks. This was found to perform poorly, scoring <5%. Fine-tuning a state-of-the-art (SOTA) LLM with millions of synthetic ARC-AGI examples scores ~10%.

"LLMs like Gemini or ChatGPT [don't work] because they're basically frozen at inference time. They're not actually learning anything." - François Chollet

See templates for fine-tuning Llama 3b, using an open source LLM (without fine-tuning it), and using frontier models (Video tutorial, ARC-AGI-Pub only).

4. Domain-Specific Language (DSL) Program Synthesis

This approach involves developing a domain-specific language (DSL). The DSL is designed to encapsulate common concepts such as rotation, mirroring, and other grid transformations that frequently occur in ARC tasks. By defining a set of primitives or basic functions that perform these transformations, solutions can be synthesized by composing these primitives into programs that solve specific tasks.

Program synthesis in this approach involves searching through possible compositions of the DSL primitives to find programs that correctly transform input grids into their corresponding output grids. This search can be brute-force or more sophisticated, but the key idea is to leverage the DSL to build task-specific programs efficiently.

See Michael Hodel's example notebook with this approach.
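To show the idea at toy scale, the sketch below defines a tiny DSL of four grid primitives and brute-forces every composition up to a fixed depth until one maps all training inputs to their outputs. The primitive set, the function names, and the training pairs are ours and purely illustrative. A realistic DSL is far larger.

```python
from itertools import product


# A tiny, hypothetical DSL: each primitive maps a grid to a new grid.
def rotate90(g):
    """Rotate the grid 90 degrees clockwise."""
    return [list(row) for row in zip(*g[::-1])]


def flip_horizontal(g):
    """Mirror the grid left-to-right."""
    return [row[::-1] for row in g]


def flip_vertical(g):
    """Mirror the grid top-to-bottom."""
    return [row[:] for row in g[::-1]]


def transpose(g):
    """Swap rows and columns."""
    return [list(row) for row in zip(*g)]


PRIMITIVES = [rotate90, flip_horizontal, flip_vertical, transpose]


def run(program, grid):
    """Apply a sequence of primitives to a grid, left to right."""
    for step in program:
        grid = step(grid)
    return grid


def synthesize(train_pairs, max_depth=3):
    """Return the shortest program consistent with all train pairs, or None."""
    for depth in range(max_depth + 1):
        for program in product(PRIMITIVES, repeat=depth):
            if all(run(program, p["input"]) == p["output"] for p in train_pairs):
                return program
    return None


train = [
    {"input": [[1, 2], [3, 4]], "output": [[3, 1], [4, 2]]},
    {"input": [[5, 0], [0, 0]], "output": [[0, 5], [0, 0]]},
]

program = synthesize(train)
print([step.__name__ for step in program])  # ['rotate90']
```

With `k` primitives and depth `d` this search visits up to `k**d` programs per depth level, which is why larger DSLs need pruning or guidance.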

5. Active inference

More recently, solutions using pre-trained large language models (LLMs) have been attempted. The LLMs are additionally trained on code data and ARC-AGI data. Because there aren't enough ARC-AGI tasks, you'll augment this with synthetic ARC-AGI-like data.

The trick to making this LLM-based solution work is using active inference. The idea is that when you're presented with a test task's demonstration examples, you fine-tune the LLM on those examples. Of course, because there are only a couple of them, you'll need to expand them artificially to have enough data points to fit your curve.

This unlocks the performance that we see with top solutions. Jack Cole's 34% solution utilizes this approach.
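One plausible way to "expand" a handful of demonstration pairs is to apply the same rotation, reflection, or color relabeling to both the input and the output of each pair. The sketch below covers only that augmentation step, not the fine-tuning itself. It is not Jack Cole's pipeline. The function names are hypothetical, and we assume colors are the integers 0 to 9 with 0 kept fixed as background.

```python
import random


def rotate90(g):
    """Rotate the grid 90 degrees clockwise."""
    return [list(row) for row in zip(*g[::-1])]


def flip_horizontal(g):
    """Mirror the grid left-to-right."""
    return [row[::-1] for row in g]


def dihedral_variants(g):
    """Return the 8 rotations/reflections of a grid, in a fixed order."""
    variants = []
    for base in (g, flip_horizontal(g)):
        current = [row[:] for row in base]
        for _ in range(4):
            variants.append(current)
            current = rotate90(current)
    return variants


def permute_colors(g, mapping):
    """Relabel every cell through a color mapping."""
    return [[mapping[c] for c in row] for row in g]


def augment_pairs(pairs, n_color_perms=2, seed=0):
    """Expand pairs with matching geometric and color transforms.

    Assumption: colors are 0-9 and color 0 (background) is never relabeled.
    """
    rng = random.Random(seed)
    augmented = []
    for pair in pairs:
        ins = dihedral_variants(pair["input"])
        outs = dihedral_variants(pair["output"])
        # Variant i of the input is paired with variant i of the output.
        for gi, go in zip(ins, outs):
            augmented.append({"input": gi, "output": go})
            for _ in range(n_color_perms):
                shuffled = list(range(1, 10))
                rng.shuffle(shuffled)
                mapping = {0: 0, **dict(zip(range(1, 10), shuffled))}
                augmented.append({
                    "input": permute_colors(gi, mapping),
                    "output": permute_colors(go, mapping),
                })
    return augmented


train = [
    {"input": [[1, 0], [0, 0]], "output": [[1, 1], [1, 1]]},
    {"input": [[0, 0], [4, 0]], "output": [[4, 4], [4, 4]]},
]
print(len(augment_pairs(train)))  # 48
```

Not every task rule survives these transforms unchanged (a rule such as "move objects upward" becomes "move objects right" after a rotation), so which augmentations help is something to check per task.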

"The fact that this technique has an outsized impact is really interesting" - François Chollet

Guidance

Let's hear from François, the creator of ARC, about what he sees as the most promising approaches as well as general tips to help you compete in ARC Prize.

Promising Approaches

François believes that the most promising category of solutions is one that we haven't really seen in practice so far. His thought process...

Discrete program search works really well. This is probably the easiest way to solve ARC-AGI tasks. Now we also know that LLMs can develop good intuition about how to solve ARC-AGI tasks. The next step is going to be to augment discrete program search with deep learning driven intuition.

When you're doing discrete program search, you have to sift through this massive program space. The problem you're facing here, of course, is combinatorial explosion.

If you manage to get a [deep learning] model that has a pretty good sense of what an ARC-AGI task and solution is supposed to look like, then you can use the deep learning model to provide suggestions as to where to try next or what a sketch of your solution program should look like.

This is a category of approaches that a few people have tried. I'm very convinced that this is the domain from which you're gonna see the highest quality solutions.
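The shape of such a guided search can be sketched with a priority queue: instead of enumerating programs blindly, always expand the partial program that a scoring function rates as most promising. In the sketch below the scoring function is a hand-written cell-mismatch count, which is only a stand-in for the learned model described above. The primitives, names, and search limits are likewise our assumptions.

```python
import heapq
from itertools import count


def rotate90(g):
    """Rotate the grid 90 degrees clockwise."""
    return [list(row) for row in zip(*g[::-1])]


def flip_horizontal(g):
    """Mirror the grid left-to-right."""
    return [row[::-1] for row in g]


def transpose(g):
    """Swap rows and columns."""
    return [list(row) for row in zip(*g)]


PRIMITIVES = [rotate90, flip_horizontal, transpose]


def mismatch(g, target):
    """Count differing cells; wrong shapes get a penalty above any cell count."""
    if len(g) != len(target) or any(len(a) != len(b) for a, b in zip(g, target)):
        return sum(len(row) for row in target) + 1
    return sum(a != b for ra, rb in zip(g, target) for a, b in zip(ra, rb))


def score(grids, targets):
    """Stand-in for a learned model: lower means closer to a solution."""
    return sum(mismatch(g, t) for g, t in zip(grids, targets))


def guided_search(train_pairs, max_expansions=1000, max_depth=6):
    """Best-first search over primitive sequences, ordered by `score`."""
    targets = [p["output"] for p in train_pairs]
    start = [p["input"] for p in train_pairs]
    tie = count()  # tie-breaker so the heap never compares programs or grids
    frontier = [(score(start, targets), next(tie), (), start)]
    for _ in range(max_expansions):
        if not frontier:
            break
        current_score, _order, program, grids = heapq.heappop(frontier)
        if current_score == 0:
            return program
        if len(program) >= max_depth:
            continue
        for prim in PRIMITIVES:
            new_grids = [prim(g) for g in grids]
            heapq.heappush(
                frontier,
                (score(new_grids, targets), next(tie), program + (prim,), new_grids),
            )
    return None


train = [
    {"input": [[1, 2], [3, 4]], "output": [[4, 3], [2, 1]]},
    {"input": [[5, 0], [0, 0]], "output": [[0, 0], [0, 5]]},
]
program = guided_search(train)
print([step.__name__ for step in program])
```

Unlike breadth-first enumeration, this search does not guarantee the shortest program. It trades that guarantee for visiting fewer candidates when the scoring signal is informative.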

Hear more on this approach from François with Dwarkesh.

General Tips

- Focus on skill acquisition and generalization: The key idea behind ARC-AGI is that each task should be novel and not solvable by simply memorizing previous examples.

- Take inspiration from human cognition: François suggests looking to cognitive science and developmental psychology for insights. For example, the idea of "core knowledge" - a set of innate priors like objectness, numbers, geometry that underpin our ability to learn more complex concepts.

- Embrace hybrid approaches: François believes a hybrid approach combining symbolic and neural methods is promising. He gives the example of how humans solve ARC-AGI tasks - we consciously reason step-by-step (symbolic) but also rely heavily on unconscious intuition to quickly prune the search space (neural). Finding ways to combine the two could lead to a breakthrough.

- Aim for generalizable abstractions: A successful ARC-AGI solver needs to be able to form novel conceptual abstractions to tackle never-before-seen tasks. François suggests trying to make your system's priors/knowledge representation easily swappable and generalizable, rather than overfit to a particular domain. The faster your system can form useful new abstractions, the better it will perform.

- Start small and scale up: François suggests that the first "ARC-AGI solving" system doesn't need to be a full-fledged AGI from the get-go. A narrow AI system that can handle ARC-like problems in a constrained domain could still be a major breakthrough. Once you have a system that can efficiently learn and generalize in one domain, you can scale it up to more knowledge and problem domains over time.

- Don't be afraid to try something new: Since ARC-AGI is still a relatively new and unexplored benchmark, François believes there is still plenty of low-hanging fruit to be plucked in terms of novel approaches. Don't be afraid to try radically different ideas from what's been attempted before. Intellectual creativity and originality can go a long way.